AI, ML, Generative AI — What's the Difference?

A three-minute fix for the confusion that makes every AI conversation harder than it needs to be.

Here’s something that happens in approximately 100% of AI conversations:

Someone says “AI” and means one thing. Someone else hears “AI” and pictures something completely different. A third person uses “machine learning” like it’s a synonym. A fourth throws in “generative AI” for good measure. Nobody corrects anybody. The conversation continues. Nothing useful gets decided.

This is not a minor communication problem. It’s the reason so many AI conversations — in meetings, in the media, in your head at 11pm reading yet another think piece — feel simultaneously overwhelming and strangely empty.

The words are doing too much work. Let’s fix that in three minutes.

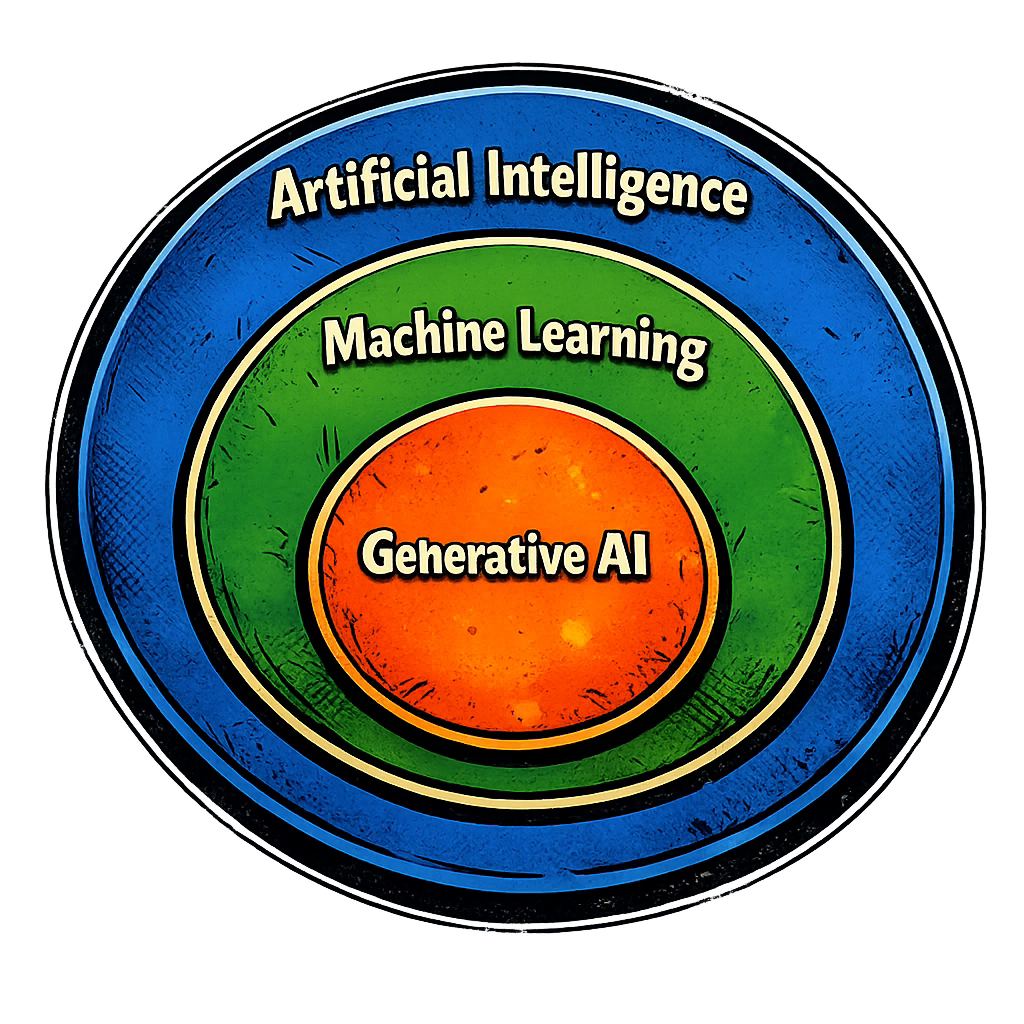

Think of It as Three Nested Circles

Not a timeline. Not a ranking. Three circles, each one sitting inside the next.

The biggest circle: Artificial Intelligence

AI is the broadest possible category. Any software system that does something a human brain could do — reading, writing, recognizing patterns, making decisions — counts as AI.

That’s the whole definition. Notably absent: consciousness, understanding, feelings, actual thinking. None of that is required. A spam filter that learned to recognize junk mail is AI. The thing recommending your next Netflix show is AI. (Debatable whether that one is working, but technically, AI.) ChatGPT is AI.

Very different things. Same circle.

The middle circle: Machine Learning

Machine learning is a subset of AI — a specific approach to building it.

The old way: write explicit rules. If the email contains “Nigerian prince,” mark it spam. You wrote the rules, the system followed them, and it was only as smart as the rules you thought to write. Which meant it was only as smart as you. Which is a problem.

Machine learning flipped that entirely. Instead of programming rules, you show the system millions of examples and let it figure out the patterns itself. You don’t tell it what spam looks like — you show it 10 million emails labeled “spam” and “not spam” and let it work out the difference.

The result is a system that handles situations its programmers never explicitly anticipated. Because it didn’t learn rules. It learned patterns.
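If seeing it helps, here is a minimal sketch of the two approaches side by side. Everything in it is an assumption made for illustration: the six training emails are invented, the function names are hypothetical, and the learning method (a tiny word-counting naive Bayes classifier) stands in for whatever a real spam filter uses.

```python
import math
from collections import Counter

# Hypothetical toy data, invented for this illustration.
TRAIN = [
    ("claim your free prize now", "spam"),
    ("free money waiting for you", "spam"),
    ("urgent prize winner claim now", "spam"),
    ("lunch meeting moved to noon", "ham"),
    ("notes from the project meeting", "ham"),
    ("are you free for lunch tomorrow", "ham"),
]


def rule_based(email):
    """The old way: one explicit, hand-written rule."""
    return "spam" if "nigerian prince" in email.lower() else "ham"


def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    return word_counts, label_counts


def learned(email, model):
    """Pick the label whose word patterns best match the email."""
    word_counts, label_counts = model
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    scores = {}
    for label in ("spam", "ham"):
        total_words = sum(word_counts[label].values())
        score = math.log(label_counts[label] / sum(label_counts.values()))
        for word in email.lower().split():
            # Add-one smoothing: unseen words lower the score
            # without ruling the label out entirely.
            count = word_counts[label][word] + 1
            score += math.log(count / (total_words + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)


model = train(TRAIN)
new_email = "you are a winner claim your prize"
print("rule-based:", rule_based(new_email))
print("learned:   ", learned(new_email, model))
```

The new email never mentions a Nigerian prince, so the hand-written rule waves it through. The learned version has never seen this exact email either, but words like "claim" and "prize" showed up mostly in the spam examples, so it flags it anyway. Nobody wrote that rule. It came out of the examples.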

This is the approach that powers most of what is happening in AI right now. If something in AI seems actually impressive, machine learning is almost certainly why.

The smallest circle: Generative AI

Generative AI is a subset of machine learning — and it’s the circle containing ChatGPT, Claude, Gemini, and every tool you’ve heard breathlessly discussed for the last two years.

What makes it “generative” is exactly what the name says: it generates new content. Text, images, code, audio. It doesn’t retrieve stored answers or look things up in a database. It creates something new each time, based on patterns learned from an enormous amount of existing content.

And if the prediction game and AI conversations are still fresh: yes, that’s the same mechanism you already know. A text-generating model doesn’t “know” anything; it predicts. One token at a time: compute a probability distribution, pick the next word, repeat. Generative AI is machine learning applied specifically to the problem of generating new content from patterns. The circles connect.
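For the curious, the predict-and-repeat loop can be sketched in a few lines. This is a toy under loud assumptions: the corpus is invented, the function names are hypothetical, and simple word-pair counting is swapped in for the neural networks that real generative models use. Only the shape of the loop carries over.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus, invented for this illustration.
CORPUS = (
    "the cat sat on the mat . "
    "the cat ate the fish . "
    "the dog sat on the rug ."
)


def train_bigrams(text):
    """Learn the patterns: count which token follows which."""
    follows = defaultdict(Counter)
    tokens = text.split()
    for current, nxt in zip(tokens, tokens[1:]):
        follows[current][nxt] += 1
    return follows


def next_token_distribution(follows, token):
    """Turn the counts for one token into probabilities."""
    counts = follows.get(token, Counter())
    total = sum(counts.values())
    return {word: n / total for word, n in counts.items()}


def generate(follows, start, max_tokens=6):
    """Predict a token, append it, repeat."""
    out = [start]
    for _ in range(max_tokens):
        dist = next_token_distribution(follows, out[-1])
        if not dist:
            break  # nothing was ever seen after this token
        # Greedy choice: always take the most probable next token.
        out.append(max(dist, key=dist.get))
    return " ".join(out)


follows = train_bigrams(CORPUS)
print(next_token_distribution(follows, "the"))
print(generate(follows, "the"))
```

Nothing is looked up here. The output is assembled one token at a time from probabilities learned from the corpus. This sketch always takes the single most likely token, which makes it repetitive; real systems typically sample from the distribution instead, which is part of why the same prompt can produce different answers.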

Why This Actually Matters (Especially Right Now)

If you’ve been hearing “AI is going to replace everyone” and feeling a knot in your stomach — this is the part that starts untying it.

The practical reason to keep these three circles straight: when someone says “AI is going to do X,” the claim means something very different depending on which circle they’re standing in.

“AI can detect cancer in medical scans better than radiologists”: that’s machine learning applied to image recognition. Narrow, specific, trained on large sets of labeled scans to do one thing extremely well. Actually impressive, and demonstrated in published studies for specific screening tasks.

“AI will write all our marketing copy”: that’s generative AI. Different tool, different strengths, completely different limitations, and a job that needs a human with judgment involved at every step.

Collapsing both into “AI” makes them sound equivalent. They aren’t. One is a scalpel. The other is a very fast, very fluent first-draft machine that needs you to finish the job. Same word. Wildly different implications.

Most of the fear-based headlines don’t make this distinction. Once you do, the conversation gets a lot less scary and a lot more useful.

Next time someone says “AI will change everything” in a meeting — and someone will — you now know the first question to ask: *which circle are you talking about?*