Become AI

You already know how to do this. You just don't know you know.

In the last post, I told you that AI doesn’t know anything — it predicts language patterns. One word at a time, based on probability, until a response is complete.

That was the explanation.

This is the experience.

I’m not going to explain anything else yet. Just play along.

Round 1: The Easy Ones

Peanut butter and _____.

Salt and _____.

Romeo and _____.

Once upon a _____.

Happy birthday to _____.

Notice what just happened. You didn’t decide anything. The words just arrived — automatically, instantly, like they were queued up waiting for you.

That’s because they basically were.

Here’s roughly what the probability distributions for two of those phrases look like (illustrative numbers, not measurements from a specific model):

Peanut butter and...

▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓ jelly ~95%

▓ jam ~3%

anything else ~2%

Once upon a...

▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓ time ~97%

anything else ~3%

Nearly everyone says the same word, because in the vast ocean of text these phrases appear in, they’re almost always followed by the same completion. The pattern is so dominant it doesn’t feel like a choice. It just feels like the answer.

This is what AI experiences on high-predictability prompts. One word dominates the probability distribution so heavily that the outcome is essentially predetermined. You didn’t consciously calculate anything just now. The model does run its calculation, but when the pattern is this strong, the result comes out the same almost every time.
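To see why a dominant word makes the outcome feel predetermined, here is a minimal Python sketch that draws the "peanut butter and..." completion 1,000 times from the illustrative percentages in the chart. The helper names are hypothetical, and lumping "anything else" into a single bucket is a simplification; a real model samples over its whole vocabulary.

```python
import random
from collections import Counter

# Illustrative probabilities from the chart above (not measured from a real model).
PEANUT_BUTTER_AND = {"jelly": 0.95, "jam": 0.03, "anything else": 0.02}

def sample_next_word(distribution, rng):
    """Pick one word at random, weighted by its probability (hypothetical helper)."""
    words = list(distribution)
    weights = list(distribution.values())
    return rng.choices(words, weights=weights, k=1)[0]

def run_trials(distribution, trials=1000, seed=0):
    """Sample the next word many times and count how often each one wins."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    return Counter(sample_next_word(distribution, rng) for _ in range(trials))

if __name__ == "__main__":
    counts = run_trials(PEANUT_BUTTER_AND)
    for word, n in counts.most_common():
        print(f"{word:>14}: {n}")
```

Every draw is still random, but with a 95% favorite the large majority of draws land on "jelly", which is why the answer feels fixed.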

Round 2: Now It Gets Interesting

The project was _____.

The meeting was _____.

I feel very _____.

Our client wants _____.

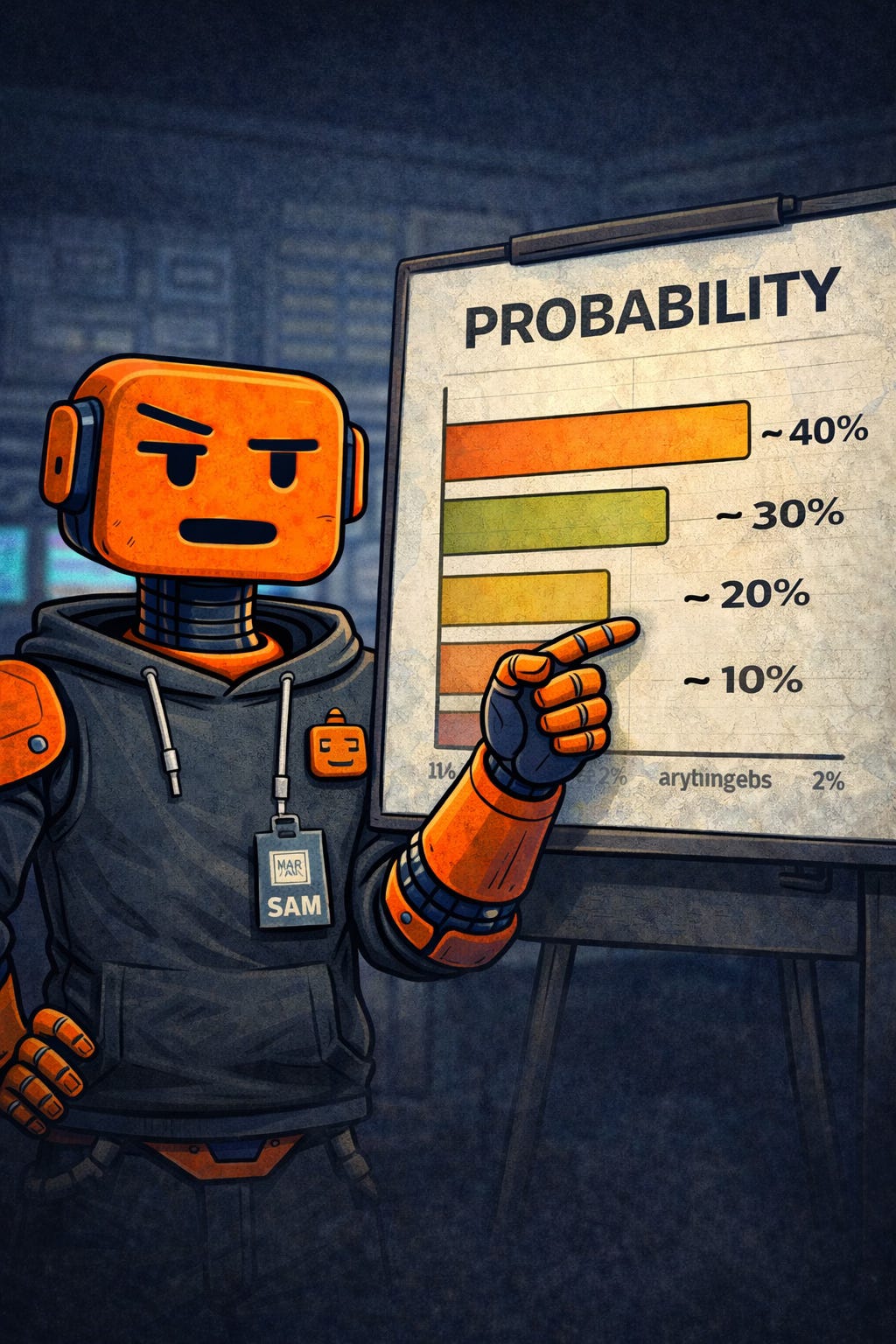

Different this time, right? Several words probably surfaced at once — and you found yourself actually choosing between them.

Here’s why:

The meeting was...

▓▓▓▓▓▓▓▓▓▓ long ~40%

▓▓▓▓▓▓▓▓ productive ~30%

▓▓▓▓▓ boring ~20%

▓▓ canceled ~10%

No single word dominates. The probability is genuinely spread across multiple reasonable completions. Your brain offered you a menu because the phrase itself doesn’t contain enough context to narrow it down.

This is exactly what AI does with ambiguous prompts — it picks from a distribution. Which word wins depends on subtle contextual signals you may not have consciously included. Change one word in your prompt and you shift which option wins entirely.

This is also why vague prompts produce inconsistent results. When the probability is spread thin, small things tip the balance. The model isn’t malfunctioning; it’s sampling faithfully from an ambiguous input. You gave it a Round 2 prompt and got a Round 2 answer. Garbage in, garbage out has never been more literal.
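Here is a small Python sketch of both effects, using the illustrative "The meeting was..." percentages. Repeated draws come back with different words, and a small nudge to one word's probability changes which word is most likely. The `nudge` helper and its 0.15 boost are hypothetical stand-ins for "a bit of extra context"; real models don't adjust probabilities this way. `most_likely` mirrors always picking the top word (greedy decoding).

```python
import random

# Illustrative probabilities for "The meeting was..." from the chart above.
MEETING_WAS = {"long": 0.40, "productive": 0.30, "boring": 0.20, "canceled": 0.10}

def sample_next_word(distribution, rng):
    """Pick one word at random, weighted by its probability."""
    words = list(distribution)
    return rng.choices(words, weights=list(distribution.values()), k=1)[0]

def most_likely(distribution):
    """The single highest-probability word (what greedy decoding would pick)."""
    return max(distribution, key=distribution.get)

def nudge(distribution, word, boost):
    """Hypothetical: add `boost` to one word, then renormalize so the total is 1 again."""
    adjusted = dict(distribution)
    adjusted[word] += boost
    total = sum(adjusted.values())
    return {w: p / total for w, p in adjusted.items()}

if __name__ == "__main__":
    rng = random.Random(42)
    ten_runs = [sample_next_word(MEETING_WAS, rng) for _ in range(10)]
    print("Ten runs:", ten_runs)
    print("Top pick:", most_likely(MEETING_WAS))
    nudged = nudge(MEETING_WAS, "productive", 0.15)
    print("Top pick after a small nudge:", most_likely(nudged))
```

With "productive" boosted, it rises to about 39% and "long" falls to about 35%, so a modest shift is enough to change the winner.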

Round 3: Wide Open

The future of work is _____.

Innovation requires _____.

Artificial intelligence will _____.

Now look at what the distribution does:

The future of work is...

▓▓▓ hybrid ~12%

▓▓ collaborative ~9%

▓▓ changing ~8%

▓▓ uncertain ~7%

▓ automated ~6%

▓ evolving ~5%

...and dozens more

No word breaks 15%. The probability is scattered so widely that the model (or your brain) could land almost anywhere and still be making a statistically defensible choice.

This is why open-ended prompts produce wildly different results every time. And why “just ask it a question” is such useless advice — the shape of your prompt determines the shape of the probability distribution. A narrow, specific prompt produces a narrow, predictable output. A wide, vague prompt produces a wide, unpredictable one.
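One standard way to put a number on a distribution's "shape" is Shannon entropy, measured in bits. It sits near 0 when one word dominates and grows as the probability spreads out. This sketch uses the illustrative percentages from all three rounds. For Round 3 it assumes, purely for illustration, that the leftover probability ("dozens more") is split evenly across 30 hypothetical words.

```python
import math

def entropy_bits(probabilities):
    """Shannon entropy in bits: H = -sum(p * log2(p)). Higher means more spread out."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)

# Round 1: "Peanut butter and..." (illustrative numbers from the chart)
round1 = [0.95, 0.03, 0.02]

# Round 2: "The meeting was..."
round2 = [0.40, 0.30, 0.20, 0.10]

# Round 3: "The future of work is..." The six listed words, plus an ASSUMED
# even split of the remaining probability across 30 hypothetical other words.
listed = [0.12, 0.09, 0.08, 0.07, 0.06, 0.05]
leftover = 1.0 - sum(listed)
round3 = listed + [leftover / 30] * 30

if __name__ == "__main__":
    for name, dist in [("Round 1", round1), ("Round 2", round2), ("Round 3", round3)]:
        print(f"{name}: {entropy_bits(dist):.2f} bits")
```

The value climbs from well under 1 bit in Round 1 to several bits in Round 3: a narrow prompt produces a low-entropy distribution, and a vague one produces a high-entropy one.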

The model isn’t being creative in Round 3. It’s being uncertain — and outputting that uncertainty as apparent variety. That distinction matters more than it sounds. Creativity implies intention. Uncertainty is just math.

The Reveal

Here’s what you just did across those three rounds:

You assigned probability weights to possible next words based on patterns you’ve absorbed over a lifetime of reading and listening. You didn’t calculate anything consciously — your brain ran the numbers automatically and surfaced the winner.

That is the complete description of how a large language model works.

Trained on billions of words (trillions, for today’s largest models). Learned which completions follow which phrases, with what frequency, in what contexts. When you type a prompt, it runs the same process across a vocabulary of tens of thousands of tokens (whole words and word fragments), using billions of learned parameters, in milliseconds per token.
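"Learned which completions follow which phrases, with what frequency" can be made concrete with a toy counting model (an n-gram table). This is a deliberate simplification. Real LLMs don't store a lookup table of counts; they compress patterns into neural-network weights. The tiny corpus and the three-word context window below are hypothetical and chosen only to show counts turning into probabilities.

```python
from collections import Counter, defaultdict

# A tiny, made-up corpus. Real training data is many orders of magnitude larger.
CORPUS = """
i made a peanut butter and jelly sandwich
she packed peanut butter and jelly for lunch
he likes peanut butter and jam on toast
we ate peanut butter and jelly at the park
""".split()

def count_next_words(words, context_size=3):
    """Count which word follows each run of `context_size` words (an n-gram table)."""
    table = defaultdict(Counter)
    for i in range(len(words) - context_size):
        context = tuple(words[i : i + context_size])
        table[context][words[i + context_size]] += 1
    return table

def next_word_probabilities(table, context):
    """Turn raw counts for one context into probabilities that sum to 1."""
    counts = table[tuple(context)]
    total = sum(counts.values())
    return {word: n / total for word, n in counts.items()}

if __name__ == "__main__":
    table = count_next_words(CORPUS)
    print(next_word_probabilities(table, ["peanut", "butter", "and"]))
    # prints {'jelly': 0.75, 'jam': 0.25}
```

Three of the four "peanut butter and" phrases in this corpus end in "jelly", so "jelly" gets 75%. Feed it more text and those percentages sharpen toward the kind of distribution shown in Round 1.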

In the last post I said: AI does not know anything. It predicts language patterns. You just spent three rounds doing exactly that. The only difference between you and the model is scale and speed — not mechanism.

Round 1 prompt → narrow distribution → confident, consistent output.

Round 3 prompt → wide distribution → variable, unpredictable output.

The difference isn’t the model. It’s the probability shape your prompt creates.

One Last Thing

Go back to that first phrase: peanut butter and _____.

Now read this: peanut butter and chairs.

Something happened just now. A small, immediate wrongness — a cognitive speed bump that arrived before you’d consciously processed why.

Peanut butter and...

▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓▓ jelly ~95%

chairs ~0.001%

Your brain flagged it instantly because you’ve internalized language patterns deeply enough to feel when something violates them. No analysis required. You just knew.
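That speed bump has a standard numeric cousin called surprisal: -log2(p), the number of bits of surprise a word carries given its probability. Here is a minimal sketch using the illustrative "jelly" and "chairs" figures from the chart (0.001% written as 0.00001). The numbers are the chart's, not a real model's.

```python
import math

# Illustrative probabilities from the chart above, not measurements.
ILLUSTRATIVE_PROBS = {"jelly": 0.95, "chairs": 0.00001}  # 0.001% = 0.00001

def surprisal_bits(p):
    """Surprisal in bits: rare words score high, expected words score near zero."""
    return -math.log2(p)

if __name__ == "__main__":
    for word, p in ILLUSTRATIVE_PROBS.items():
        print(f"{word:>6}: {surprisal_bits(p):5.2f} bits")
```

"jelly" scores under a tenth of a bit, while "chairs" scores over 16. This number only measures how unusual a word is in context. It does not measure whether a statement is true, so a fabricated claim written in a familiar style can score as unsurprising as "jelly".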

AI does not have that.

If the model generates a wrong fact, a made-up citation, or a statistic nobody ever verified, it produces it with the same fluency as jelly. No alarm. No hesitation. The model does assign a probability to every word it picks, but that number measures how typical the word sounds in context, not whether the claim is true. None of it shows up in the text you read.

The fluency stays constant. The confidence stays constant. The wrongness is invisible — to the model.

Not to you.

That instinct — the one that felt chairs before you could explain why — is what you bring to this collaboration. The model handles the probability at scale. You handle the knowing-when-something-is-off.

That’s not a minor role. That’s the whole game.

(Not convinced yet? Here's a 60-second video from 3Blue1Brown on YouTube showing exactly what we just did: word prediction in action.)

SAM is an AI-powered, human-guided resource for people who want to actually use AI — without the hype, the panic, or the CS degree. Next up: AI, ML, Generative AI — why these aren’t the same thing, and why the difference actually matters.